Rows: 4,608

Columns: 12

$ subject <dbl> 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1…

$ verb <chr> "pet", "organize", "burn", "stack", "toast", "need", "cut"…

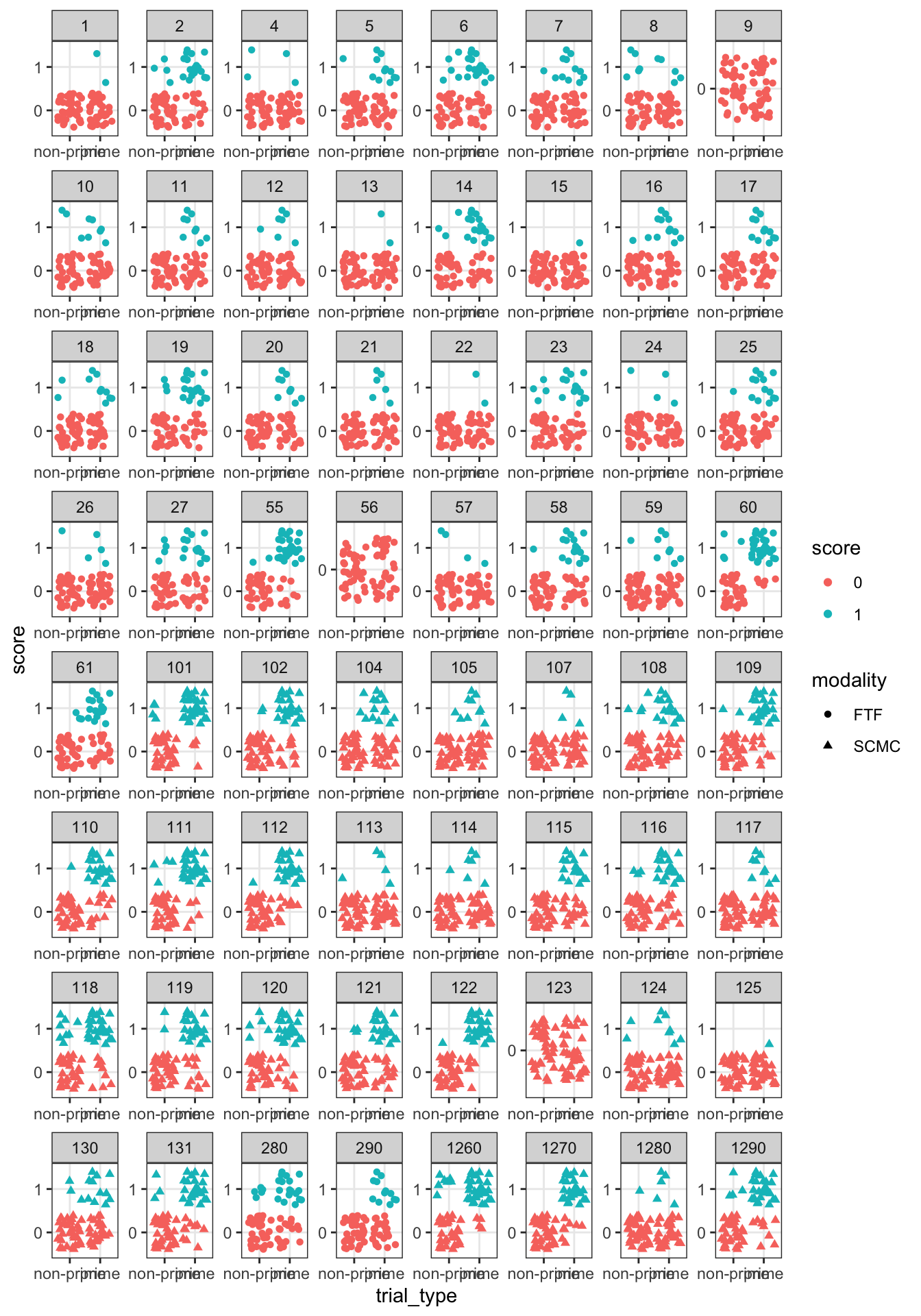

$ score <fct> 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0…

$ modality <chr> "FTF", "FTF", "FTF", "FTF", "FTF", "FTF", "FTF", "FTF", "F…

$ test <chr> "priming1", "priming1", "priming1", "priming1", "priming1"…

$ trial_order <dbl> 12, 9, 10, 11, 13, 14, 15, 16, 17, 18, 19, 20, 21, 22, 23,…

$ session <chr> "session1", "session1", "session1", "session1", "session1"…

$ trial_type <chr> "non-prime", "prime", "non-prime", "prime", "prime", "non-…

$ cloze <dbl> 40, 40, 40, 40, 40, 40, 40, 40, 40, 40, 40, 40, 40, 40, 40…

$ wmc <dbl> 74, 74, 74, 74, 74, 74, 74, 74, 74, 74, 74, 74, 74, 74, 74…

$ prod_pre <dbl> 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0…

$ rec_pre <dbl> 6, 6, 6, 6, 6, 6, 6, 6, 6, 6, 6, 6, 6, 6, 6, 6, 6, 6, 6, 6…